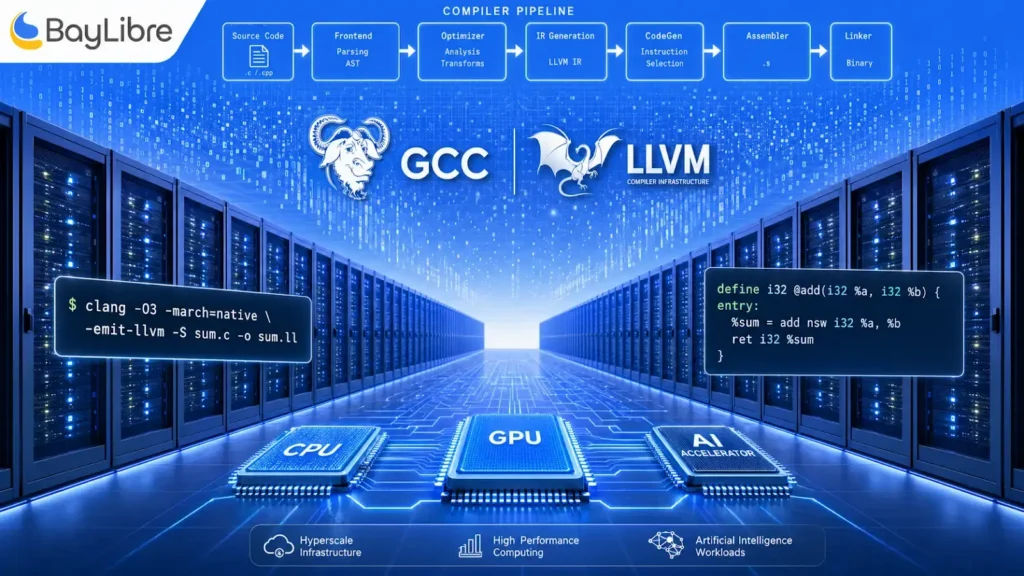

Compiler & Toolchain Engineering for Hyperscale, HPC, and AI Infrastructure

GCC, LLVM, and MLIR expertise for teams building on heterogeneous CPU, GPU, and AI accelerator architectures.

BayLibre helps hyperscale, HPC, and AI infrastructure teams unlock performance and portability through advanced compiler and toolchain expertise matched to modern heterogeneous hardware.

Key challenges

Extracting performance from complex, heterogeneous architectures (CPU, GPU, AI accelerators)

Adapting compilers to new instruction sets and custom hardware features

Managing fragmentation between internal toolchain forks and upstream evolution

Ensuring long-term performance portability across hardware generations

Our expertise and services in this industry

BayLibre works deep in the compiler and toolchain stack, helping customers design, extend, and optimize compilation flows tailored to their hardware. Our expertise spans GCC, LLVM, and MLIR, from front-end language support to back-end code generation and optimization passes.

We assist with performance tuning, vectorization, accelerator offloading, and compiler–runtime interactions, ensuring software stacks fully exploit hardware capabilities while remaining maintainable.

Open-source & ecosystem alignment

Our work is tightly aligned with upstream compiler ecosystems. By contributing directly to GCC, LLVM, and MLIR, we help customers avoid long-lived forks, influence roadmap decisions, and ensure their optimizations remain compatible with future compiler releases. This upstream-first approach is critical for sustaining performance gains over time.

Contact us!

Our expert team in the US or Europe will contact you within one business day to schedule a technical discovery and kick things off.